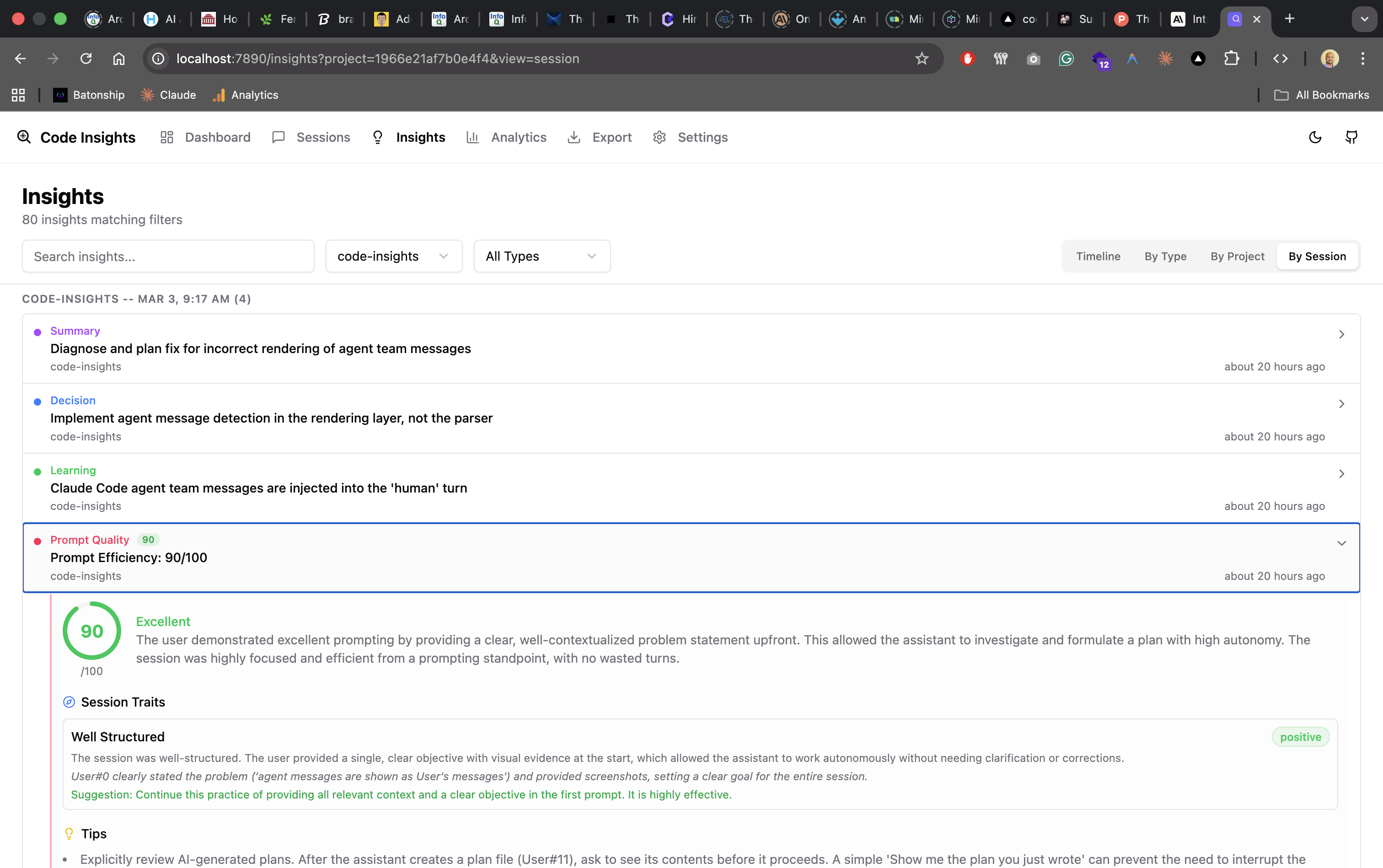

Insights & Analysis

Use LLM-powered analysis to extract decisions, learnings, techniques, and patterns from your AI coding sessions. Supports OpenAI, Anthropic, Gemini, Ollama, and llama.cpp.

What are Code Insights insights?

When you analyze a session, the CLI server sends the conversation to your configured LLM provider and extracts structured insights. These are stored in the local SQLite database and displayed in the dashboard.

The analysis uses a "senior staff engineer writing for a knowledge base" persona — each insight is written as if a future developer will read it 6 months from now with no context about the session.

What types of insights are extracted?

| Type | What it captures |
|---|---|
| Summary | Narrative of what was accomplished, outcome status (success/partial/abandoned/blocked), and key artifacts changed |
| Decision | A technical choice with full context: situation, reasoning, rejected alternatives, trade-offs, and conditions for revisiting |
| Learning | A technical discovery: observable symptom, root cause, transferable takeaway, and when it applies |
| Technique | A reusable approach or pattern demonstrated |
| Prompt Quality | Five dimension scores (0–100), deficit and strength findings, and before/after takeaways |

Decision structure

Each decision insight includes:

- Situation — what problem or requirement led to the decision

- Choice — what was chosen and how it was implemented

- Reasoning — the key factors that tipped the decision

- Alternatives — rejected options with reasons why

- Trade-offs — what downsides were accepted

- Revisit when — conditions under which to reconsider
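As a rough illustration of the structure above, a decision insight could be modeled as a small record type. This is a sketch only: the class names, field names, and the `to_markdown` helper are hypothetical and simply mirror the bullets, not the actual storage schema or export format.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RejectedAlternative:
    option: str  # what was considered
    reason: str  # why it was rejected


@dataclass
class DecisionInsight:
    # Hypothetical field names that mirror the bullets above;
    # the real storage schema may differ.
    situation: str
    choice: str
    reasoning: str
    alternatives: List[RejectedAlternative] = field(default_factory=list)
    trade_offs: str = ""
    revisit_when: str = ""

    def to_markdown(self) -> str:
        """Render the decision as a short Markdown note."""
        lines = [
            f"**Situation:** {self.situation}",
            f"**Choice:** {self.choice}",
            f"**Reasoning:** {self.reasoning}",
            "**Alternatives:**",
        ]
        lines += [f"- {alt.option}: {alt.reason}" for alt in self.alternatives]
        lines += [
            f"**Trade-offs:** {self.trade_offs}",
            f"**Revisit when:** {self.revisit_when}",
        ]
        return "\n".join(lines)


# Made-up example content, for illustration only.
example = DecisionInsight(
    situation="Session data needed to be queryable offline.",
    choice="Store parsed sessions in a local SQLite file.",
    reasoning="No server to run, and a single file is easy to back up.",
    alternatives=[RejectedAlternative("Hosted database", "requires network access")],
    trade_offs="No built-in multi-device sync.",
    revisit_when="Sessions need to be shared across machines.",
)
print(example.to_markdown())
```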

Learning structure

Each learning insight includes:

- Symptom — what went wrong or was confusing

- Root cause — the underlying technical reason

- Takeaway — the transferable lesson for similar situations

- Applies when — conditions under which this knowledge is relevant

How do I run analysis on a session?

- Open the dashboard: `code-insights dashboard`

- Navigate to Sessions

- Click into any session

- Click Analyze (requires LLM configured in Settings)

Analysis runs server-side via the Hono API. Progress is streamed back to the dashboard in real time via Server-Sent Events (SSE), so you can see each insight as it's extracted. Your API key is stored on your machine and is sent only to your configured LLM provider.
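To make the streaming step concrete, here is a minimal sketch of how a client might split an SSE text stream into messages. The parsing rules (`event:` and `data:` fields, a blank line ending each message, lines starting with `:` treated as comments) follow the standard SSE format. The event names `insight` and `done` and the JSON payloads are assumptions for illustration, not the actual events emitted by the Code Insights server.

```python
import json
from typing import Dict, Iterable, Iterator


def parse_sse(lines: Iterable[str]) -> Iterator[Dict[str, str]]:
    """Yield one {"event": ..., "data": ...} dict per complete SSE message."""
    event, data = "message", []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line terminates the current message.
            if data:
                yield {"event": event, "data": "\n".join(data)}
            event, data = "message", []
        elif line.startswith(":"):
            # Comment line, often used as a keep-alive.
            continue
        else:
            name, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # a single leading space is stripped
            if name == "event":
                event = value
            elif name == "data":
                data.append(value)


# Hypothetical stream; event names and payload fields are invented.
stream = [
    "event: insight\n",
    'data: {"type": "decision", "title": "Use SQLite"}\n',
    "\n",
    ": keep-alive\n",
    "event: done\n",
    "data: {}\n",
    "\n",
]
events = list(parse_sse(stream))
payloads = [json.loads(e["data"]) for e in events]
```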

During analysis, the LLM also classifies the session into one of 7 character types (deep_focus, bug_hunt, feature_build, etc.) and writes it back to the session record.

How do I analyze multiple sessions at once?

From the Dashboard page, click Analyze on the unanalyzed sessions card to run analysis across all pending sessions. You can also analyze individual sessions from the session detail page. Progress is shown in real time via SSE streaming.

How does prompt quality scoring work?

The prompt quality analysis evaluates your prompting across five dimensions, each scored 0–100.

Dimension scores

| Dimension | What it measures |
|---|---|
| context_provision | How well you provided upfront context before making requests |
| request_specificity | How precisely and specifically you stated what you needed |
| scope_management | How well you kept requests focused without drifting |
| information_timing | Whether key constraints were given early vs. revealed late |
| correction_quality | How effectively you guided the AI when corrections were needed |
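If you work with these scores outside the dashboard, a small validation helper can catch malformed data early. The sketch below assumes the scores arrive as a plain mapping from dimension name to integer. That shape, the helper names, and the example values are hypothetical; only the five dimension names and the 0–100 range come from the table above.

```python
from typing import Dict

DIMENSIONS = (
    "context_provision",
    "request_specificity",
    "scope_management",
    "information_timing",
    "correction_quality",
)


def validate_scores(scores: Dict[str, int]) -> Dict[str, int]:
    """Check that exactly the five dimensions are present, each within 0-100."""
    missing = set(DIMENSIONS) - set(scores)
    extra = set(scores) - set(DIMENSIONS)
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} unexpected={sorted(extra)}")
    for name, value in scores.items():
        if not 0 <= value <= 100:
            raise ValueError(f"{name} is out of range: {value}")
    return scores


def weakest_dimension(scores: Dict[str, int]) -> str:
    """Return the lowest-scoring dimension (first in table order on ties)."""
    return min(DIMENSIONS, key=lambda name: scores[name])


# Invented scores for illustration.
example_scores = validate_scores({
    "context_provision": 72,
    "request_specificity": 85,
    "scope_management": 64,
    "information_timing": 40,
    "correction_quality": 78,
})
focus_area = weakest_dimension(example_scores)
```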

Deficit categories

These categories flag problems in your prompting behavior:

| Category | What it flags |
|---|---|
| vague-request | Request lacked enough specificity for the AI to act correctly |
| missing-context | Necessary background or file context was absent |
| late-constraint | Key requirements were revealed after work had already started |
| unclear-correction | A correction redirected the AI but didn't explain what was wrong or what to do instead |
| scope-drift | The session covered too many unrelated objectives |
| missing-acceptance-criteria | No clear definition of "done" was given |
| assumption-not-surfaced | An implicit expectation wasn't stated, causing misalignment |

Strength categories

These categories flag effective prompting behavior worth repeating:

| Category | What it means |
|---|---|
| precise-request | Request was specific and actionable from the start |
| effective-context | Relevant background and constraints were provided upfront |
| productive-correction | Corrections were clear and led directly to better output |

Two-layer output

Each prompt quality analysis produces:

- Takeaways — user-facing before/after examples shown on the Insights page, one per significant finding

- Findings — categorized items (deficit or strength) used for Reflect aggregation across sessions

The Reflect pipeline aggregates findings across weeks to show recurring prompting patterns over time.
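One way to picture this kind of aggregation is to bucket findings by ISO week and count categories per bucket. The sketch below is an assumption about the general idea, not the Reflect pipeline's actual implementation. The tuple layout, function names, sample dates, and the "appears in at least two weeks" threshold are all invented for illustration.

```python
from collections import Counter, defaultdict
from datetime import date
from typing import Dict, Iterable, List, Tuple

Finding = Tuple[date, str, str]  # (session date, "deficit" | "strength", category)

# Hypothetical findings; categories are taken from the tables above.
findings: List[Finding] = [
    (date(2025, 1, 6), "deficit", "vague-request"),
    (date(2025, 1, 8), "deficit", "vague-request"),
    (date(2025, 1, 9), "strength", "precise-request"),
    (date(2025, 1, 14), "deficit", "late-constraint"),
    (date(2025, 1, 15), "deficit", "vague-request"),
]


def aggregate_by_week(items: Iterable[Finding]) -> Dict[str, Counter]:
    """Count finding categories per ISO week, keyed like '2025-W02'."""
    weeks: Dict[str, Counter] = defaultdict(Counter)
    for day, _kind, category in items:
        iso = day.isocalendar()
        weeks[f"{iso[0]}-W{iso[1]:02d}"][category] += 1
    return dict(weeks)


def recurring(weeks: Dict[str, Counter], min_weeks: int = 2) -> List[str]:
    """Return categories that appear in at least `min_weeks` distinct weeks."""
    seen: Counter = Counter()
    for counts in weeks.values():
        seen.update(counts.keys())
    return sorted(c for c, n in seen.items() if n >= min_weeks)


weekly = aggregate_by_week(findings)
patterns = recurring(weekly)
```

Counting distinct weeks rather than raw occurrences keeps a single noisy session from looking like a long-term habit.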

LLM analysis cost tracking

Every analysis call (session insights, prompt quality, facet extraction) records its token usage and estimated cost in the analysis_usage table. This lets the dashboard show you the estimated cost of each analysis, broken down by analysis type, model, and session.

The cost display appears in the session detail panel next to each analysis result. Costs are estimates based on public provider pricing and may differ slightly from your actual bill.
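A token-based estimate of this kind typically multiplies input and output token counts by a per-million-token price. The sketch below shows only that arithmetic. The `Pricing` type, the function name, and the prices are placeholders, not real provider rates and not the formula Code Insights necessarily uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens (placeholder value)
    output_per_million: float  # USD per 1M output tokens (placeholder value)


def estimate_cost(input_tokens: int, output_tokens: int, pricing: Pricing) -> float:
    """Estimate the USD cost of one analysis call from its token usage."""
    total = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    )
    return total / 1_000_000


# Placeholder prices for illustration; check your provider's price list.
placeholder_pricing = Pricing(input_per_million=3.0, output_per_million=15.0)
cost = estimate_cost(input_tokens=12_000, output_tokens=1_500, pricing=placeholder_pricing)
print(f"estimated cost: ${cost:.4f}")
```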

Where are insights stored?

All insights are stored in the insights table in ~/.code-insights/data.db. You can export individual sessions as Markdown from the session detail panel, or use the Export page to generate AI-synthesized knowledge across sessions. See Analytics & Export for details.
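Because the database is a regular SQLite file, you can inspect it with any SQLite client. The sketch below uses Python's standard `sqlite3` module to list each table and its columns without assuming a schema. The helper names are hypothetical, and the demo runs against an in-memory database with an invented `insights` table, so the columns shown are not the real ones.

```python
import sqlite3
from pathlib import Path
from typing import Dict, List

# Path taken from the text above.
DB_PATH = Path.home() / ".code-insights" / "data.db"


def open_readonly(path: Path) -> sqlite3.Connection:
    """Open an existing SQLite file read-only so it cannot be modified by accident."""
    return sqlite3.connect(path.as_uri() + "?mode=ro", uri=True)


def describe_tables(conn: sqlite3.Connection) -> Dict[str, List[str]]:
    """Return {table name: [column names]} for every table in the database."""
    names = [
        row[0]
        for row in conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        )
    ]
    tables = {}
    for name in names:
        columns = conn.execute(f'PRAGMA table_info("{name}")').fetchall()
        tables[name] = [column[1] for column in columns]  # index 1 is the column name
    return tables


# Demo on an in-memory database with an invented schema.
demo = sqlite3.connect(":memory:")
demo.execute("CREATE TABLE insights (id TEXT PRIMARY KEY, type TEXT, title TEXT)")
schema = describe_tables(demo)
print(schema)
```

To inspect your own data, call `describe_tables(open_readonly(DB_PATH))` and build queries from the column names it reports.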