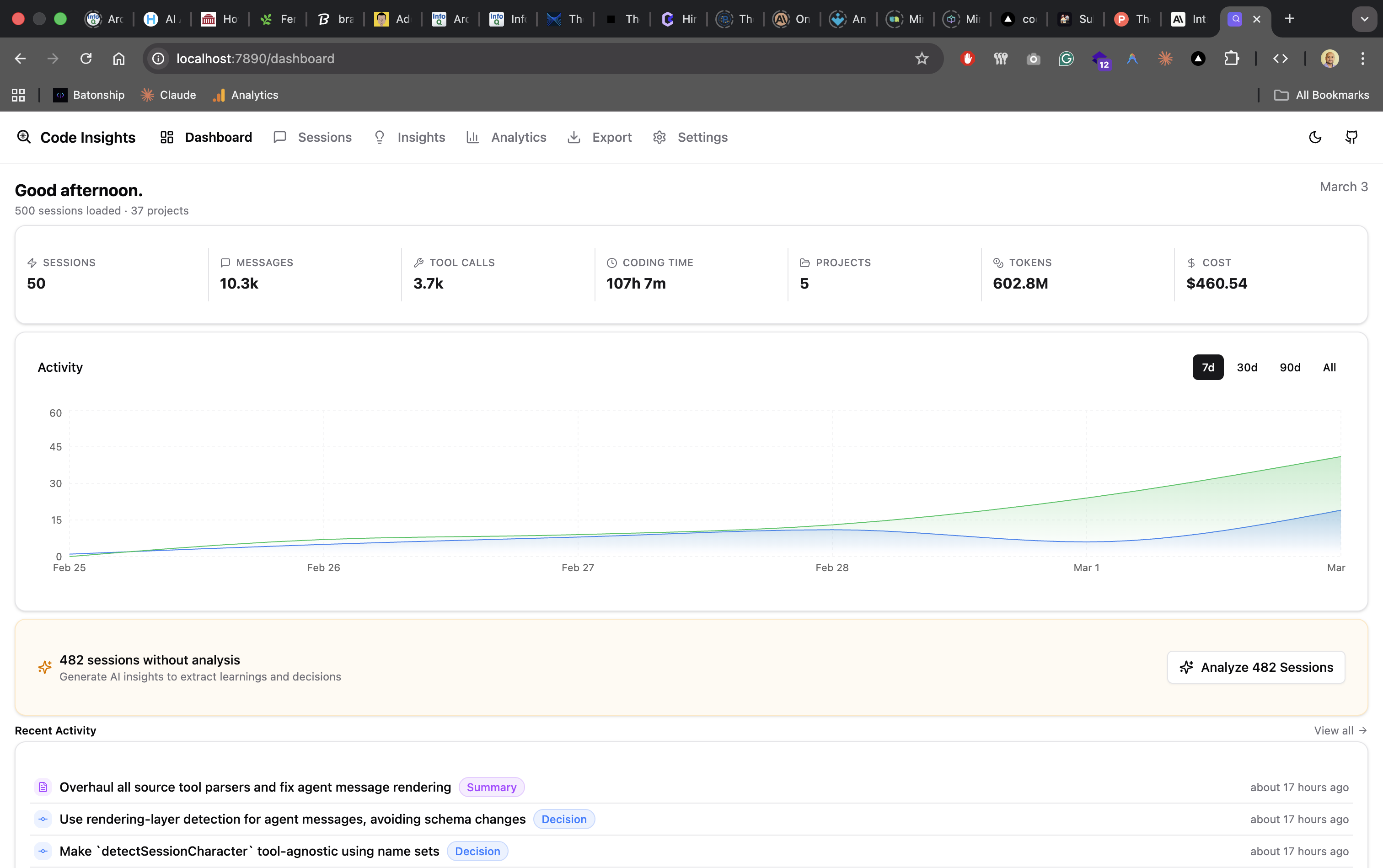

The Dashboard

Tour the Code Insights embedded dashboard — session list, activity charts, project stats, insights, and chat view, all served locally at localhost:7890.

Launching the dashboard

code-insights dashboard

Opens your browser at localhost:7890. The dashboard is a Vite + React SPA served by the Hono server embedded in the CLI. It runs as long as the terminal process is alive.
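Because the server lives only as long as the CLI process, a script that depends on the dashboard may want to wait until it answers. The following is a minimal sketch, not part of Code Insights: `wait_for_dashboard` is a hypothetical helper, and it assumes `curl` is installed and that the root URL returns a successful HTTP status once the server is ready.

```shell
# Hypothetical helper: poll until the dashboard URL answers, or give up.
# Args: URL (default http://localhost:7890), attempts (default 10),
#       delay between attempts in seconds (default 1).
wait_for_dashboard() {
  local url="${1:-http://localhost:7890}"
  local attempts="${2:-10}"
  local delay="${3:-1}"
  local i=0
  while [ "$i" -lt "$attempts" ]; do
    # -f: treat HTTP errors as failure, -s: silent, -o /dev/null: discard the body
    if curl -fs -o /dev/null "$url"; then
      echo "Dashboard is up at $url"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "No response from $url after $attempts attempts" >&2
  return 1
}
```

Start code-insights dashboard in one terminal, then call wait_for_dashboard from another. The function only reads from the server and does not start or stop it.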

Navigation

| Page | What it shows |

|---|---|

| Dashboard | Activity chart, session stats, unanalyzed session count, bulk analyze button |

| Sessions | Three-panel session browser with project nav, session list, and detail panel with Insights/Prompt Quality/Conversation tabs |

| Insights | All AI-generated insights in a compact, collapsible list with Timeline/By Type/By Project/By Session views and recurring pattern detection |

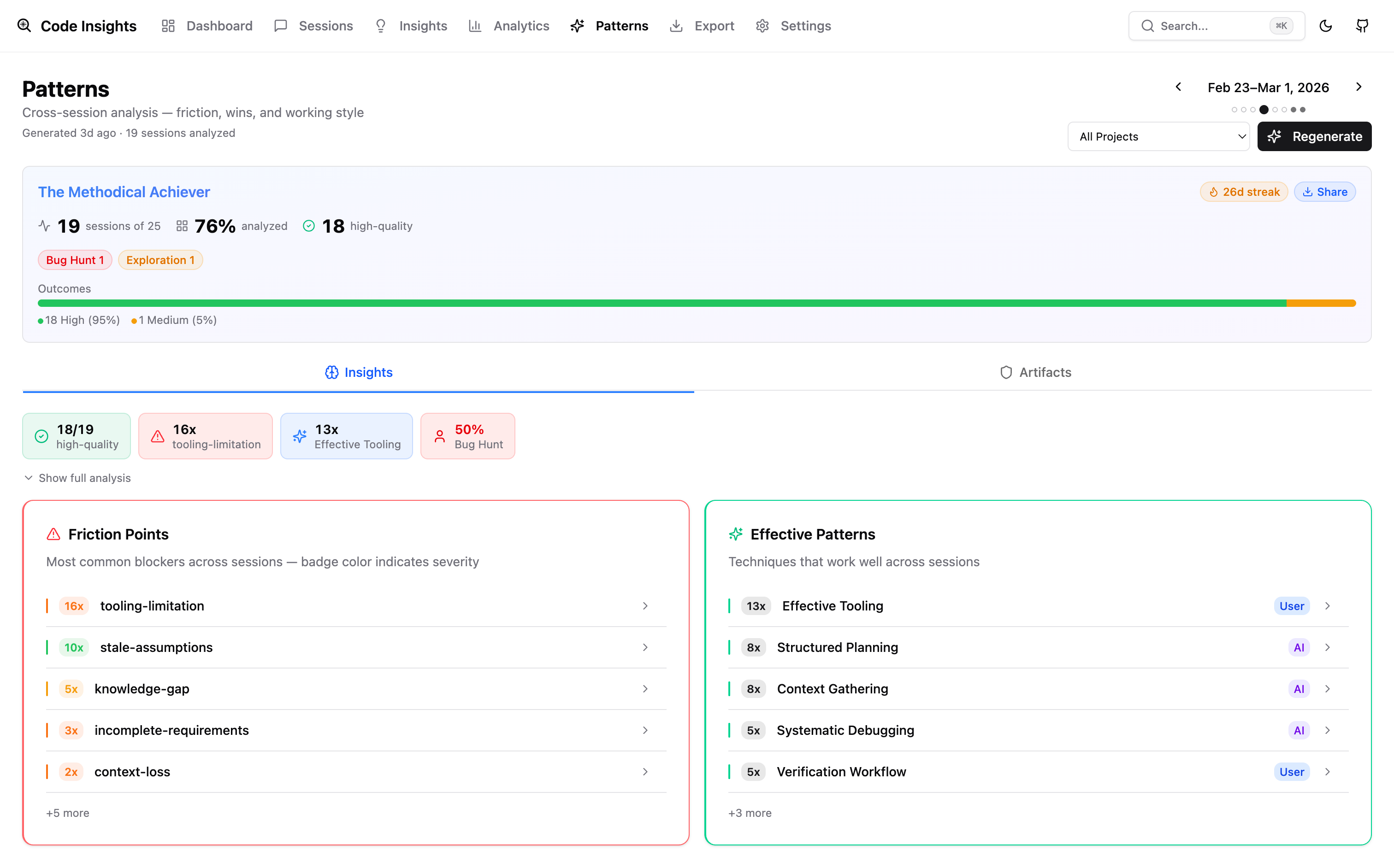

| Patterns | Weekly Reflect synthesis: friction breakdown, effective pattern summary, and CLAUDE.md rule suggestions with ISO week navigation |

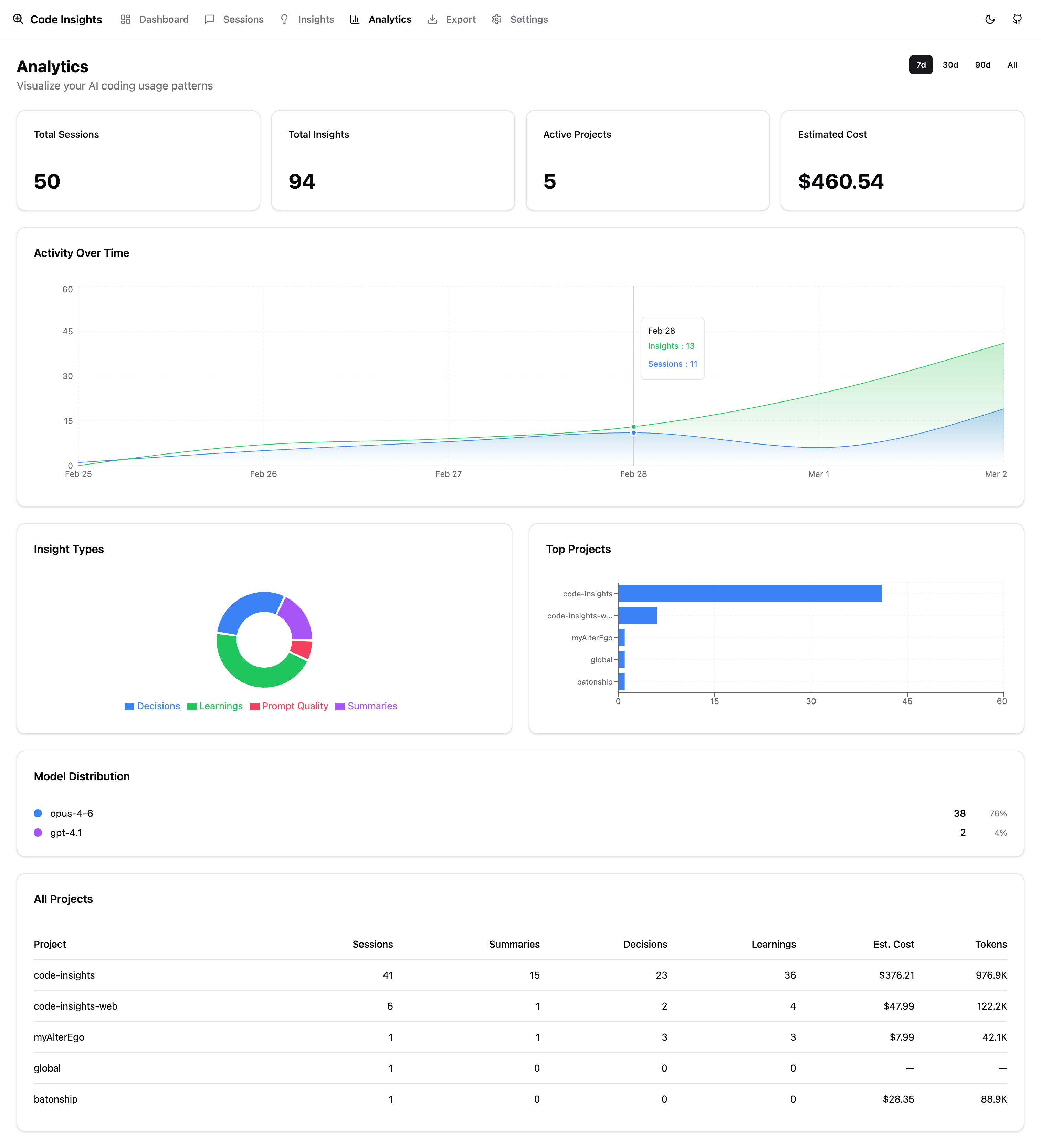

| Analytics | Charts: activity over time, model usage, cost breakdown, project table |

Patterns

Analytics

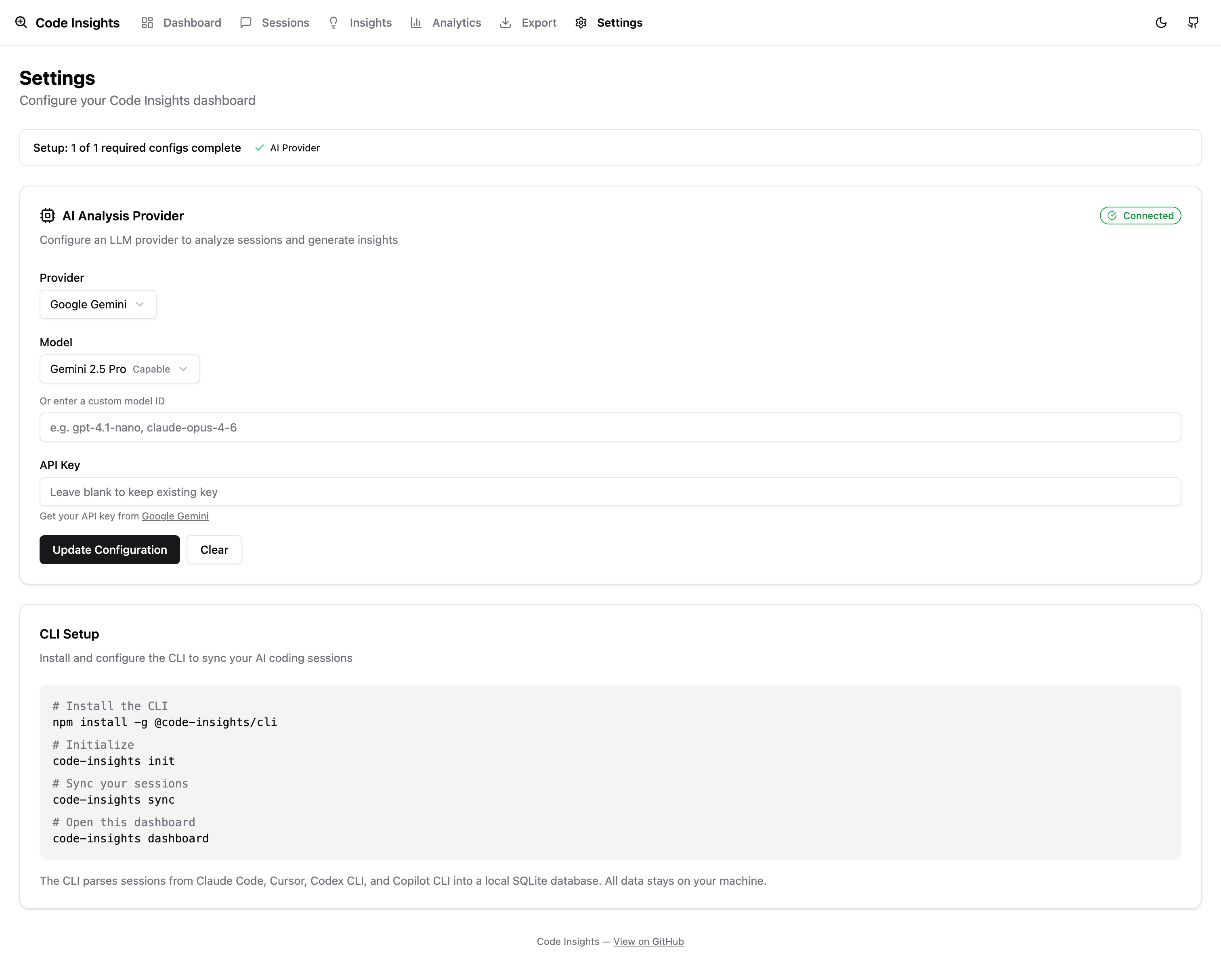

| Export | Download sessions and insights as Markdown (by project or date range) | | Settings | LLM provider configuration with credential testing and Ollama model discovery |

Session management

Sessions with two or fewer messages (trivial, abandoned prompts) can be hidden from the session list with the soft-delete (trash) feature. Hidden sessions don't appear in the dashboard or stats, but they remain in the database and can be restored with code-insights sync --force. To batch-hide trivial sessions from the CLI, use code-insights sync prune.

LLM configuration

To enable AI-powered insights:

- Open Settings in the dashboard

- Select a provider: OpenAI, Anthropic, Gemini, or Ollama

- Enter your API key

- Return to any session and click Analyze

Ollama runs fully locally — no API key required. Install Ollama and start a model:

ollama pull llama3.2

Then select Ollama in Settings and enter your model name.
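Before entering a model name in Settings, it can help to confirm that the model is actually available locally. The sketch below wraps `ollama list` in a hypothetical helper, `ollama_has_model`. It assumes the usual `ollama list` layout (a header row, then one model per line with `name:tag` in the first column) and that `awk` is installed.

```shell
# Hypothetical helper: succeed if `ollama list` shows the given model.
# Accepts either a bare name (llama3.2) or a full name:tag (llama3.2:latest).
ollama_has_model() {
  local model="$1"
  # Skip the header row, then compare the first column with and without its tag.
  ollama list 2>/dev/null | awk -v m="$model" '
    NR > 1 {
      split($1, parts, ":")
      if ($1 == m || parts[1] == m) found = 1
    }
    END { exit !found }
  '
}
```

For example, `ollama_has_model llama3.2 || ollama pull llama3.2` pulls the model only when it is missing.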

Port configuration

If port 7890 is already in use, the dashboard will fail to start. Check what's using the port:

lsof -i :7890
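The same check can be wrapped in a small function and run before launching the dashboard. This is a minimal sketch: `port_in_use` and `dashboard_port_status` are hypothetical names, not part of the CLI. It assumes `lsof` is installed, and note that without elevated privileges `lsof` may not see listeners owned by other users.

```shell
# Hypothetical helper: succeed if a process is listening on the given TCP port.
port_in_use() {
  local port="$1"
  if ! command -v lsof >/dev/null 2>&1; then
    echo "lsof not found; cannot check port $port" >&2
    return 2
  fi
  # -n/-P skip host and port name lookups; -sTCP:LISTEN keeps only listeners.
  lsof -nP -iTCP:"$port" -sTCP:LISTEN >/dev/null 2>&1
}

# Hypothetical helper: print a one-line status for the dashboard port (default 7890).
dashboard_port_status() {
  local port="${1:-7890}"
  if port_in_use "$port"; then
    echo "Port ${port} is in use; run: lsof -i :${port}"
    return 1
  fi
  echo "Port ${port} is free"
}
```

Running `dashboard_port_status && code-insights dashboard` starts the dashboard only when nothing else appears to be listening on port 7890.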